
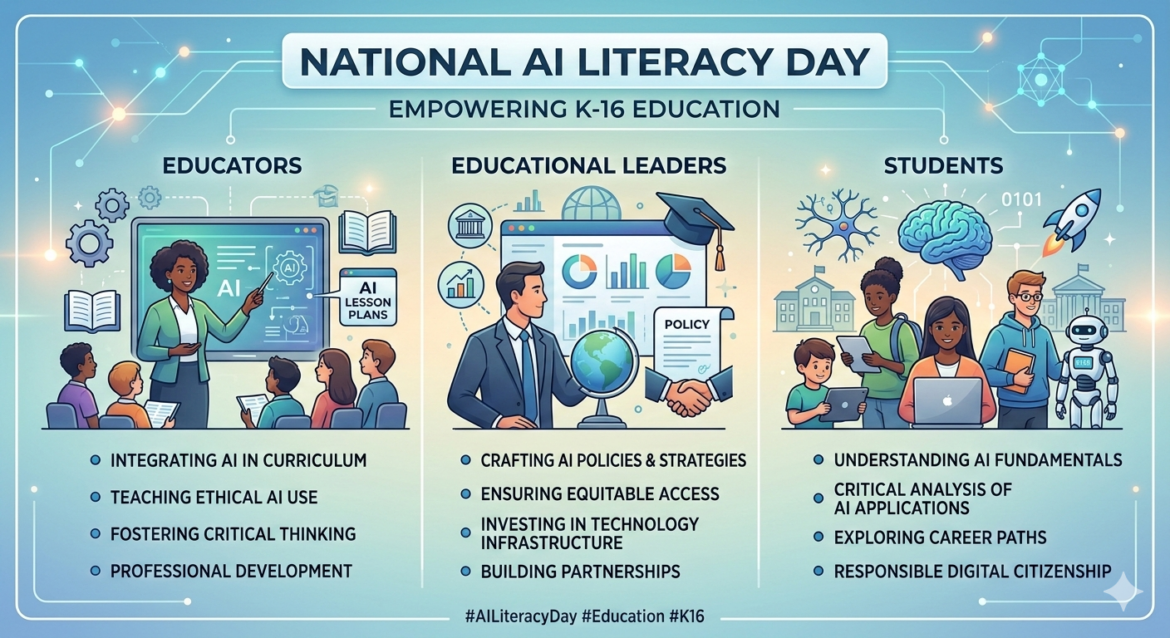

Texas has 5.5 million K-12 students (2024-2025). During the 2024-25 school year, 85% of teachers and 86% of students nationally used Gen AI, and 52% of Texas teachers used AI tools specifically for math instruction. These stats are a signal that educators are curious, motivated, and moving on AI adoption. The adoption is real, and it is happening in classrooms now.

Did You Know? You can join the TCEA Community’s “All About AI” group and get regular updates about Gen AI and how it affects education, nonprofits, and businesses via the AI Class Notes publication. Note: To get access to the Generative AI Adoption Checklist featured in this blog entry, you will need the password posted in the Comments section of the 4/10/2026 Community post (Issue #2). What could you have done with this information a week ago?

The opportunity that creates is significant. 31 states have already issued formal K-12 AI policy, which means there are frameworks to learn from, adapt, and improve on. Districts that build thoughtful guidance now get ahead of the confusion rather than reacting to it later.

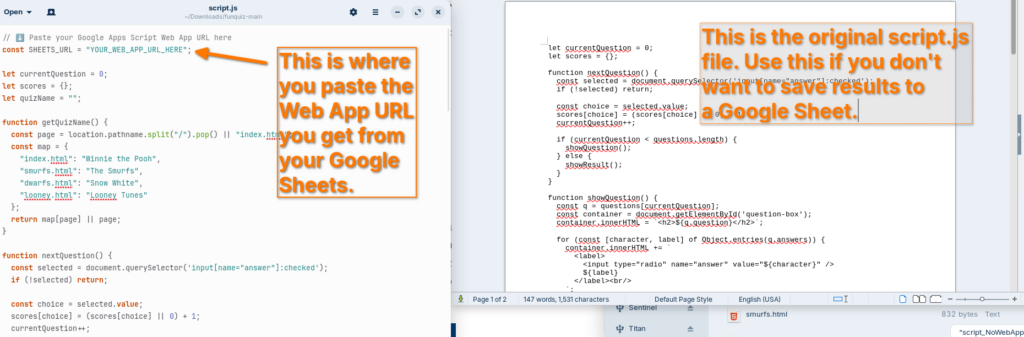

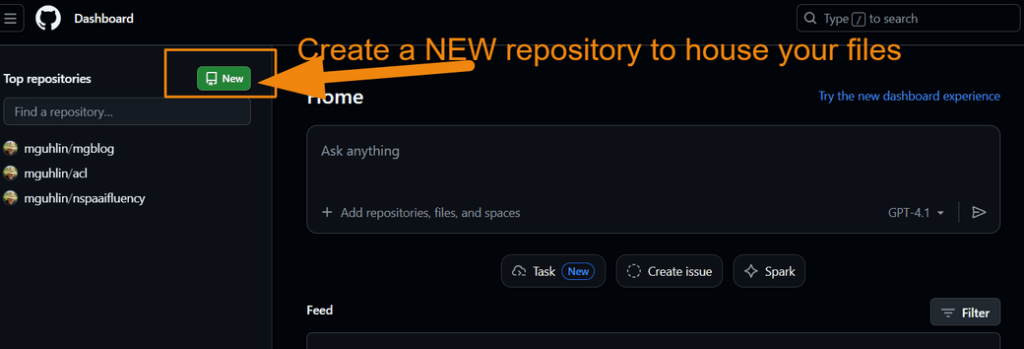

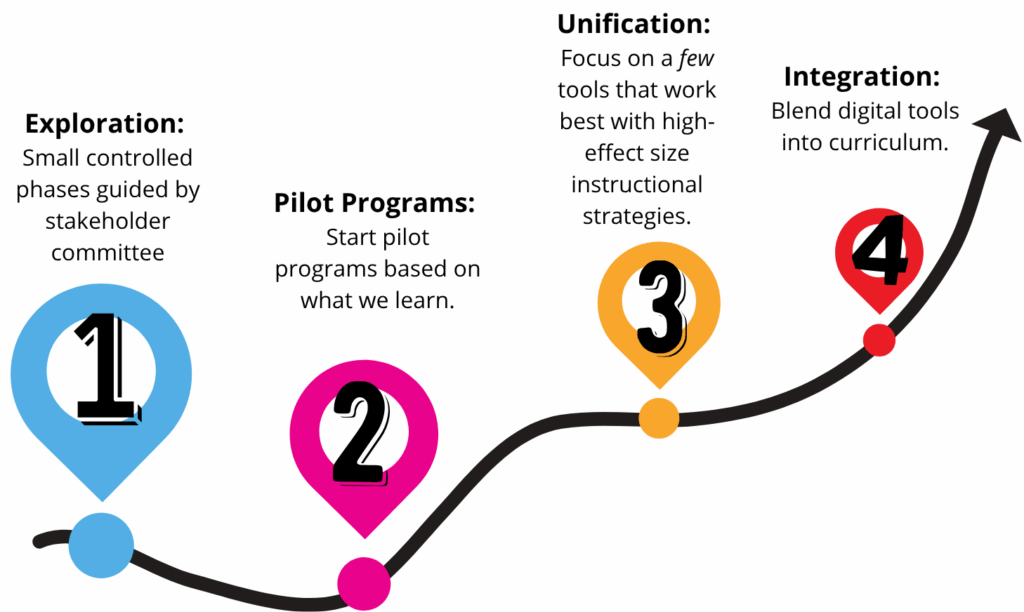

An Interactive Roadmap

Before we get too far along in the conversation, here’s an interactive roadmap (short version) you may want to explore to see the journey your stakeholder committee may need to embark on. It can help you get ahead of the confusion.

So, how did you do? What’s your campus’/district’s overall readiness? Now that you have a better picture, continue reading.

Closing the Gap

The gap most districts face is not a lack of enthusiasm. Rather, it is the space between teachers who are already using Gen AI and institutions that have not yet caught up with clear expectations, data guardrails, and professional development to match.

If my superintendent had asked in March, “Where are we on AI?” I would have had something cooking right away, bringing stakeholders together. It’s like when my superintendent said, “Let’s roll out BYOD at the high school.”

Sure, you can roll something out fast, but are you rolling it out the right way? The right way is more than a policy guide with a title, a table of contents, and a lot of words. It has to be built on the community wisdom of the people in your space.

That gap is one you can bridge (unless you, or more likely school leaders sensitive to politics, are afraid of conversations).

A Checklist to Deepen the Conversation

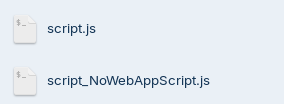

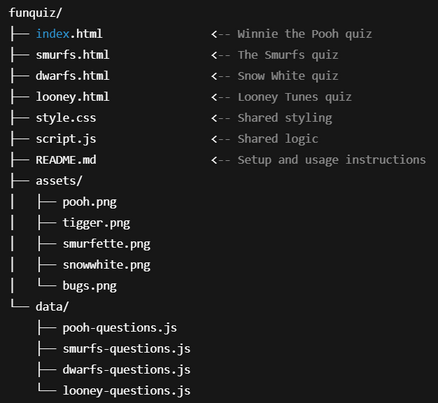

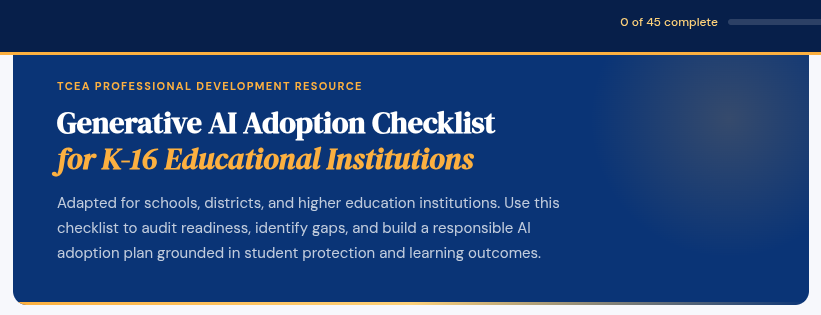

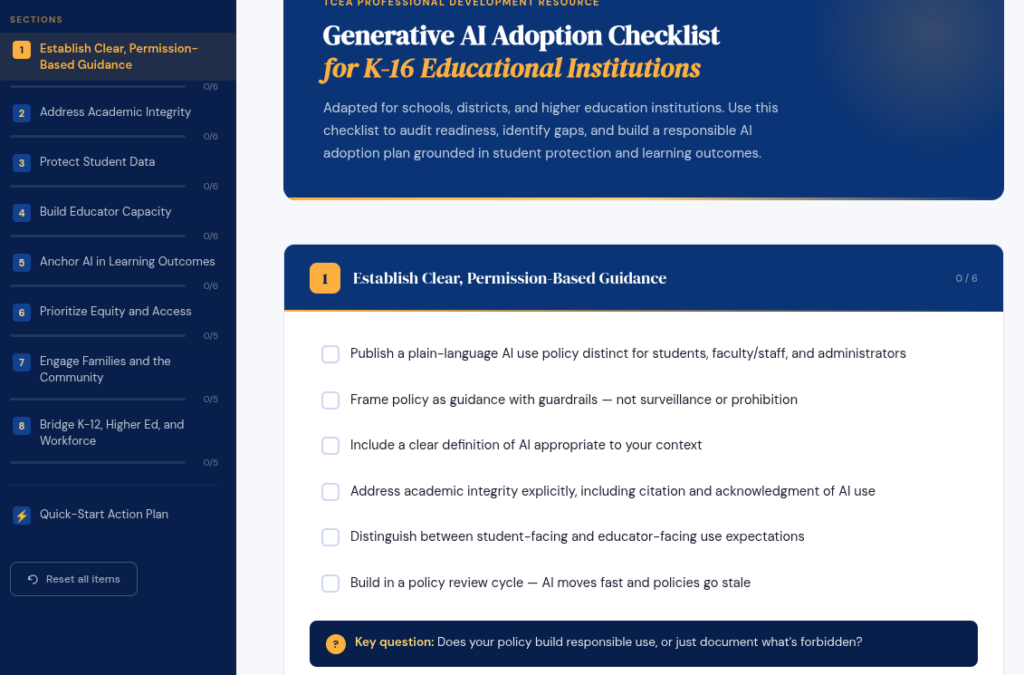

The interactive Generative AI Adoption Checklist for K-16 Educational Institutions gives you a structured way to see exactly where you stand. Only 45% of principals nationally report having any school or district AI policy in place, and more than one in five Texas teachers said their district provided no training on the tools they were already using. The checklist covers 46 items across eight areas, works entirely in your browser, and saves your progress automatically. No account. No download. No license.

What the Checklist Covers

The eight sections map to the decisions that actually keep CTOs and curriculum directors up at night. They are not theoretical. Each one connects to a real gap that tends to appear when districts try to move from “we should do something about AI” to “here is what we expect.”

| Section | Core Question |

|---|---|

| Establish Clear Guidance | Does your policy build responsible use, or just document what’s forbidden? |

| Address Academic Integrity | Do students know exactly what is and isn’t allowed in each assignment? |

| Protect Student Data | Do staff know what student information cannot enter any Gen AI tool? |

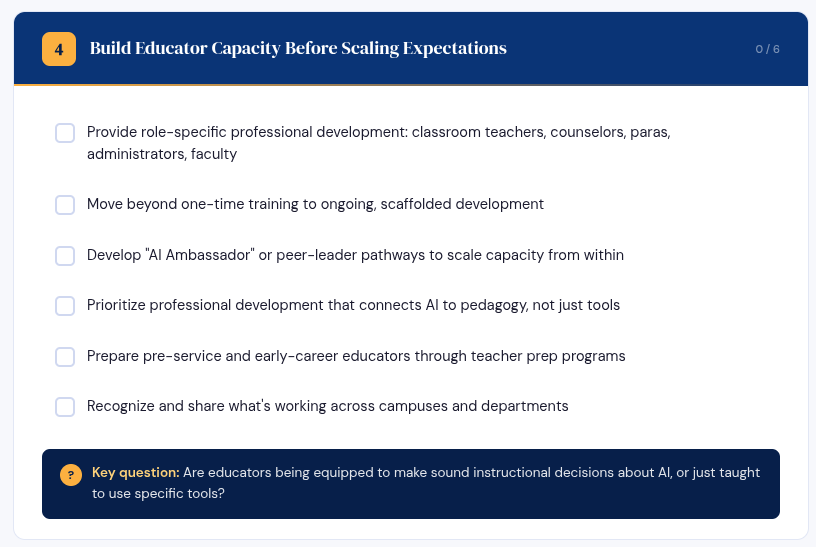

| Build Educator Capacity | Are educators being equipped to make instructional decisions, or just taught to use tools? |

| Anchor AI in Learning | Would this use of Gen AI produce better-prepared learners, or shortcuts? |

| Prioritize Equity and Access | Who benefits from your adoption, and who gets left further behind? |

| Engage Families | Do families feel informed and included, or surprised by what they eventually learn? |

| Bridge K-12 to Workforce | Are your students graduating into environments where their Gen AI skills will hold up? |

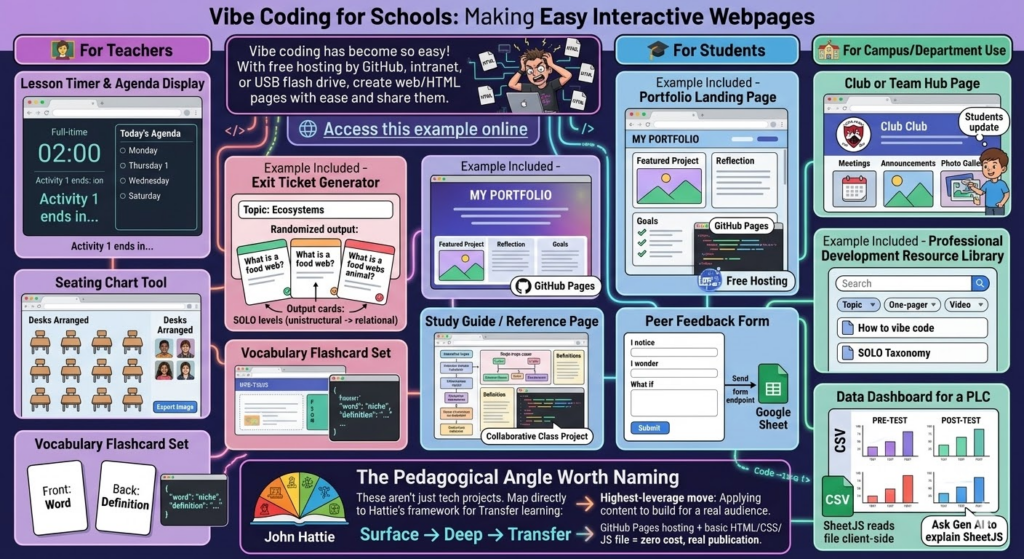

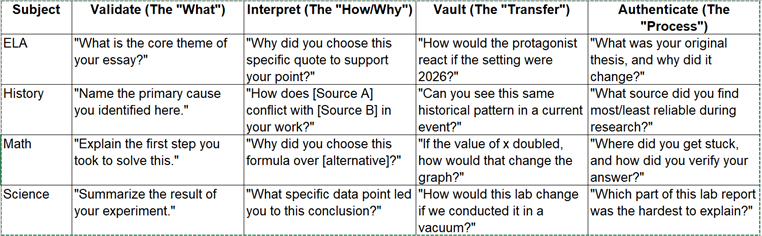

Use this to frame your review of your campus or district AI plan, or of the relevant components of your Campus or District Improvement Plan (CIP/DIP). The question I ask most often is the one in section five: does this use of Gen AI deepen learning, or replace it? Most plans never get to that question. You can see this question pop up in teaching and learning, too:

How often do we get past Surface Learning strategies to Deep and/or Transfer Learning?

The answer to both questions is a simple “seldom.”

How It Works

Open the checklist in any browser. Check off items as you complete or confirm them. Your progress saves automatically to your browser, so you can return to it across multiple sessions without losing your place.

The sidebar shows your progress by section, and a progress bar at the top tracks your overall completion across all 46 items. Each section ends with a key question designed to surface honest conversation, not just box-checking.
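For the technically curious, here is a minimal sketch of how per-section and overall progress like this could be tracked and saved. It is not the checklist's actual code. The `Section` class, the `overall_progress` function, and the sample items are all hypothetical, and the JSON string simply stands in for whatever the browser stores.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Section:
    """One checklist section: item text mapped to checked / not checked."""
    name: str
    items: dict[str, bool] = field(default_factory=dict)

    def progress(self) -> tuple[int, int]:
        """Return (items checked, total items) for this section."""
        return sum(self.items.values()), len(self.items)


def overall_progress(sections: list[Section]) -> tuple[int, int, int]:
    """Return (checked, total, percent complete) across all sections."""
    done = sum(s.progress()[0] for s in sections)
    total = sum(s.progress()[1] for s in sections)
    percent = round(100 * done / total) if total else 0
    return done, total, percent


# Hypothetical sample data: section names come from the table above,
# but the items themselves are invented for illustration.
sections = [
    Section("Establish Clear Guidance", {
        "Policy drafted": True,
        "Policy shared with staff": False,
    }),
    Section("Protect Student Data", {
        "Restricted data types listed": True,
        "Staff briefed on restrictions": False,
        "Approved tools reviewed": False,
    }),
]

# Saving and restoring state, analogous to progress persisting between sessions.
saved = json.dumps({s.name: s.items for s in sections})
restored = [Section(name, items) for name, items in json.loads(saved).items()]

checked, total, percent = overall_progress(restored)
print(f"{checked} of {total} items complete ({percent}%)")
```

The same counting applies to the full 46 items: each section reports its own tally for the sidebar, and the totals roll up into the overall bar.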

There are no trick items. Each one reflects a decision your institution either has made, needs to make, or has been avoiding.

A Starting Point, Not a Finish Line

The checklist includes a Quick-Start Action Plan at the bottom. It is worth reading before you dive in, because it reframes the tool from a one-time audit to a staged process.

The “this week” item is the most useful one: review your current policy against the checklist and identify gaps. Avoid trying to fix them right away. Instead, simply name them. That alone is a conversation worth having with your team, your board, or your campus leadership.

Most organizations will find gaps in more than one section, and that’s OK for now. If that is still your situation in three to four months, you are moving too slowly.

What This Is Not

This checklist is NOT a compliance document. One reason is that it does not cite specific legislation, TEKS, etc., although the privacy and data protection items in section three are written with FERPA and student records in mind. It also does not tell you which Gen AI tools to approve, and it does not make the decisions for you. The intent is to assist you and a committee of stakeholders in making those decisions.

The goal is to give you a structured way to see what you have, what you are missing, and where the real risks are. It does this in the absence of state guidance. This checklist assists you in asking tough questions and getting answers from stakeholders. It’s important to do this before people knock on your door asking, “Why haven’t you done anything?” That’s one place no leader wants to be.

Open the Generative AI Adoption Checklist and spend 15 minutes on section one. See what you actually have in place. Note: To access the Checklist, you will need the password posted in the comments of the 4/10/2026 Community post (Issue #2).