The use of AI tools for student learning is a controversial topic today. If students use them to brainstorm or create, are they actually learning? Or are they just copying and pasting the chatbot’s information and passing it off as their own? Dr. Philippa Hardman offers a different approach: inviting students to use AI to learn by teaching.

Research on Learning by Teaching with AI

Empirical evidence shows that the act of teaching others solidifies a student’s knowledge, fosters self-regulatory skills, and provides immediate diagnostic feedback. This is the basis of the Jigsaw Method, a powerful instructional strategy. John Hattie found that this strategy has an effect size of 1.20, three times the hinge effect size of 0.40. This makes it one of the most effective ways for students to learn.

Research also links the Teaching Others strategy to these benefits:

- Powerful Active Learning: When students know they will have to teach a concept, their engagement increases. Research (Benware and Deci, 1984) shows the mere expectation of having to teach something leads to deeper processing of material.

- Self-Regulated Learning: Teaching requires planning, monitoring, and reflecting, which aligns with Zimmerman’s (2002) findings on fostering lifelong learning skills.

- Immediate Feedback: Students learn more deeply when they receive instant signals about gaps in their own understanding (Okita and Schwartz, 2013).

What is learning by teaching?

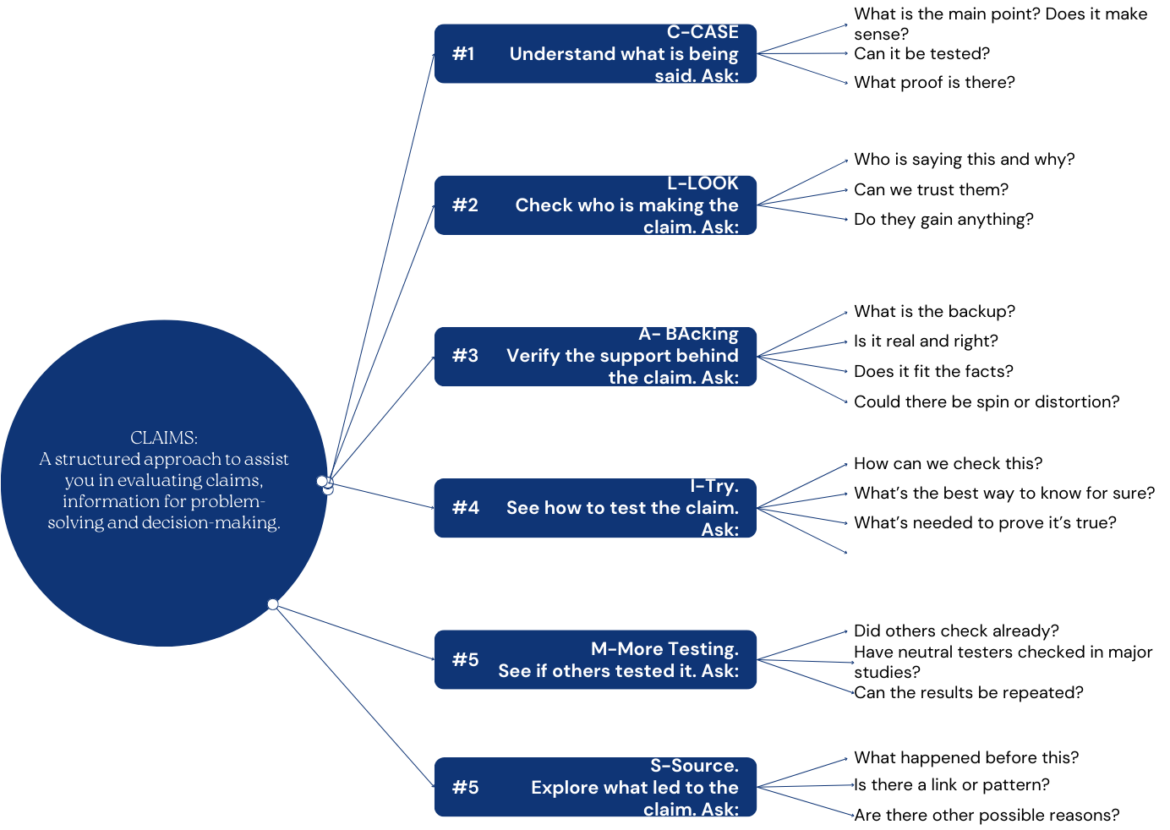

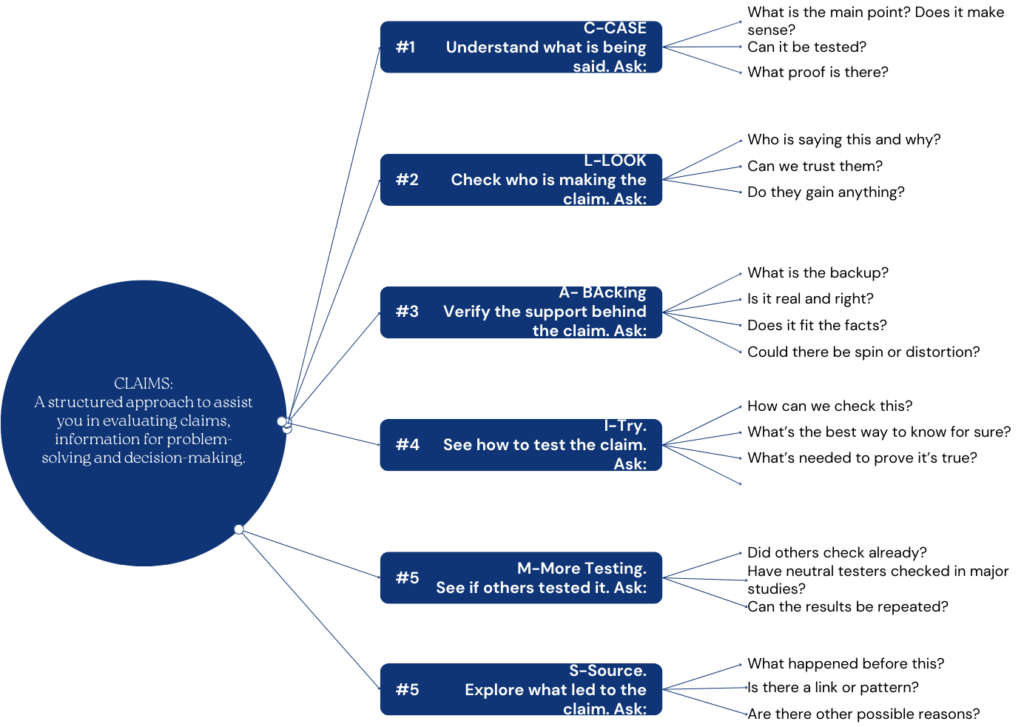

Learning by teaching is what happens when one student must become knowledgeable about a specific topic in order to teach it to another student. Students need some guidelines before they can be successful with this strategy. Below are some detailed, actionable steps for introducing “Teaching Others” in your classroom:

- Explain the Rationale

  - What: Communicate that students will master content more effectively by teaching it.

  - Why: Emphasize that teaching reveals gaps in understanding, prompting deeper learning.

  - How: Provide examples (e.g., brief student-to-student explanations, micro-teaching sessions).

- Model the Process

  - Demonstrate: Show how you break down a topic into key ideas, check for clarity, and adapt your explanation based on a learner’s feedback.

  - Use Think-Alouds: Verbalize your thought process when planning an explanation: where you begin, how you check if the “learner” is following, and how you handle confusion.

- Set Up Structured Activities

  - Peer Teaching: Pair or group students. Assign each person a subtopic and have them teach the others.

  - Jigsaw Method: Divide a larger concept into chunks. Each student becomes an expert in one chunk, then teaches the rest of the group.

  - Feedback Loops: Incorporate time for student-teachers to ask: “What didn’t make sense?” This fosters immediate clarification and deeper insight.

- Use Guiding Tools

  - Checklists or Scripts: Provide a structured format students can follow when explaining content (e.g., “Introduction → Key Points → Examples → Questions → Summaries”).

  - Reflection Prompts: After teaching, ask students to reflect: “What questions did you get? What was hardest to explain? What would you clarify next time?”

- Assess and Reflect

  - Peer/Self-Assessment: Offer rubrics for both the “teachers” and “learners” to assess clarity, engagement, and understanding.

  - Ongoing Improvement: Encourage students to note where they felt uncertain or where their explanations fell short, then give them a chance to re-teach.

Integrating AI into Learning by Teaching

Some students have learned to use AI to do their work for them, but the same tool can help them learn content when the student “teaches” the chatbot. Below are steps to help you embed AI into a teaching-to-learn model:

- Set Clear Guidelines

  - Explain the Role of AI: Frame the AI as a “practice student.” Students should teach it step by step, anticipating mistakes.

  - Define Expectations: Students must ask the AI questions, check its understanding, and correct it when it errs. Remind them that the AI might not always respond like a human learner.

- Structure AI Teaching Sessions

  - Assess the AI’s “Knowledge”: Have students ask targeted questions to see what the AI “already knows.”

  - Plan Explanations: Before each session, have students create a mini-plan of how they will teach the concept (e.g., definitions, examples, practice prompts).

  - Guide and Monitor: Students should carefully observe the AI’s responses for inaccuracies or superficial understanding. Encourage them to probe deeper with follow-up questions.

- Encourage Iterative Feedback

  - Correct Misconceptions: If the AI gives incorrect answers, students must identify the error and explain the correct reasoning.

  - Reflect on Gaps: Ask students to note any point where the AI’s response is “too perfect” or changes “persona.” Discuss how these inconsistencies affect the teaching process.

- Promote Self-Regulated Learning

  - Plan → Teach → Reflect Cycle: Encourage students to plan an explanation, carry it out with the AI, then reflect on what went well and what needs improvement.

  - Debug and Retry: If the AI answers correctly too quickly, challenge students to dig deeper or choose a more complex subtopic. If the AI is incorrect, have students practice “debugging” its misconceptions.

- Document and Share

  - Track Interactions: Have students keep a log of what they taught the AI and how the AI responded. This helps them see their own progress and recurring misunderstandings.

  - Discuss in Class: As a group, share successful teaching moves and highlight areas where the AI was hard to “teach.” Use these discussions to improve collective teaching strategies.

Tips for Maximizing Impact

- Introduce Controlled Complexity

  - Start Simple: Use AI for clear-cut, lower-level tasks or concepts.

  - Scale Up: As students become comfortable, move to more abstract or complex topics that force deeper explanations.

- Maintain Realism

  - Acknowledge AI Limitations: AI might not display truly “human” confusion. Remind students that real learners show partial understanding and might need repeated explanations.

  - Supplement with Real Peers: Balance AI-based teaching with real peer-tutoring experiences for richer feedback and authentic misconceptions.

- Leverage Reflection for Growth

  - Celebrate “Teachable Moments”: When the AI produces unexpected output, encourage students to refine their own thinking and explanations.

  - Encourage Metacognition: Have students articulate what strategy they used to clarify confusion and how they adjusted for the AI’s strengths or weaknesses.

By weaving these actionable steps into your classroom practice, you can harness the robust research behind Teaching Others and capitalize on new AI possibilities, enabling your students to learn more deeply, think more critically, and grow more confident in their own knowledge.