If you’ve ever led professional development in a school district, you know the frustration: you spend weeks planning, teachers show up, they nod along, they say nice things on the feedback form, and then nothing changes in classrooms. Feedback forms and attendance sheets are easy to collect, but they rarely tell you whether teachers are using what they learned or whether it’s helping students.

The Kirkpatrick Model offers a well-established way to go beyond surface-level feedback and assess the real impact of your PD efforts. In this post, you’ll get a practical breakdown of all four Kirkpatrick levels, with a special focus on Level 3: Behavior, plus concrete tools you can use immediately.

Why This Matters

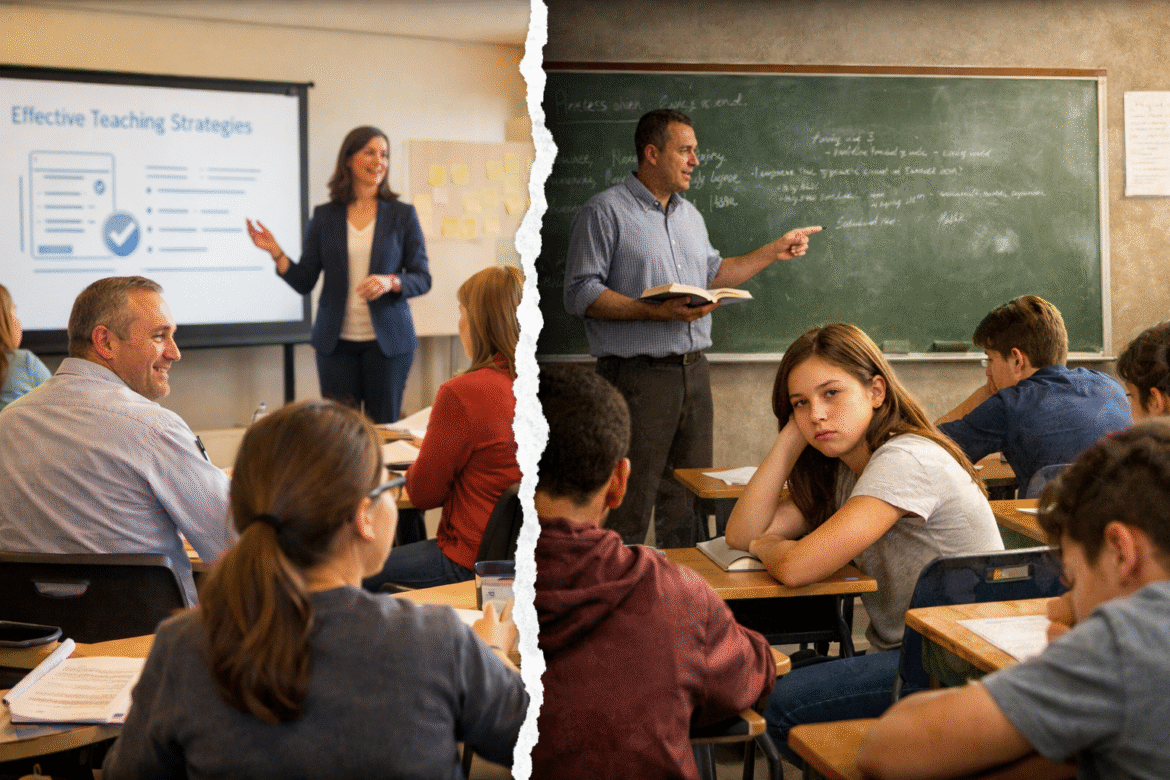

School districts invest enormous resources in professional development, yet research has repeatedly found that much of it fails to change classroom practice. The problem isn’t that teachers don’t want to improve. The problem is that most districts measure the wrong things: satisfaction instead of implementation, understanding instead of application. We stop gathering data right when the real work begins.

A Quick Primer: What Is the Kirkpatrick Model?

The Kirkpatrick Model is a widely used framework for evaluating training effectiveness. It was originally developed for workplace training, but it adapts well to education.

Here’s the snapshot:

Level 1: Reaction – How did participants feel about the training?

Level 2: Learning – What knowledge or skills did they gain?

Level 3: Behavior – Are they applying what they learned in real-world situations?

Level 4: Results – What measurable outcomes resulted from the training?

In school districts, the Kirkpatrick Model helps you move beyond attendance and satisfaction to ask the questions that matter: Are teachers using what they learned? Is it improving instruction? Are students benefiting?

The Most Common Missteps in Schools

Even with the best intentions, school-based PD often falls short. Here are three scenarios that play out in districts everywhere:

The Satisfaction Trap: A district invests in a full-day workshop on differentiated instruction. The post-survey shows 92% satisfaction, and a knowledge check shows that teachers understand the concepts. Everyone considers it a success. Three months later, classroom observations reveal that differentiation strategies aren’t being used. What went wrong? The district stopped measuring at Level 2 and never checked whether anything changed in practice.

The Forgetting Factor: A district collects beautiful post-session surveys showing 95% satisfaction and strong knowledge gains. But when classroom visits happen three weeks later, only a small fraction of teachers have tried any of the strategies. The rest had genuine intentions but got swept back into their routines. Without follow-up measurement, the district has no idea that its investment has essentially disappeared.

The Misalignment Problem: Teachers attend PD on student-centered learning, but the evaluation asks generic questions about “session quality” and “presenter effectiveness.” There’s no connection between what the training promised and what gets measured, making it impossible to improve future sessions.

The 4 Levels in Action (With Emphasis on Level 3)

Level 1: Reaction – Did They Like It?

Use quick surveys or exit tickets to assess how teachers felt about the PD. This level is useful for immediate feedback but tells you almost nothing about whether practice will change.

Level 2: Learning – Did They Learn It?

Use short pre/post assessments or reflective prompts to check for knowledge gains. But here’s the thing: knowledge doesn’t automatically translate to action. A teacher can perfectly explain formative assessment strategies and never use a single one in their classroom.

Level 3: Behavior – Are They Using It?

This is the most critical level and often the most overlooked. This is where you find out if your PD actually changed anything.

Here’s how to gather real evidence:

- Review coaching logs from classroom visits

- Collect teacher reflections 2-3 weeks after the session

- Use targeted walk-throughs with focused “look-for” checklists

- Track participation in follow-up structures like practice partnerships

For example, after a workshop on discussion protocols, observe whether teachers are using accountable talk stems, check if students are speaking in more extended turns, or review video clips teachers submit. You’re looking for evidence that the strategy moved from the training room into actual instruction.

The challenge is that Level 3 requires patience and infrastructure. You need systems for follow-up, tools for observation, and a commitment to tracking implementation over weeks, not hours. Reaching Level 4 is a worthy goal, but make Level 3 your primary focus.

Want help writing follow-up questions? Use this AI prompt:

"Help me understand Kirkpatrick Level 3 evaluation questions. Then give me 10 questions at this level for a [Topic] training to measure how teachers are applying skills in their job."

For a formative assessment training, an AI tool might suggest: “How often have you used exit tickets since the training session?” or “What changes have you made in how you check for understanding?”

Level 4: Results – Did It Make a Difference?

Here, you’re connecting training to student outcomes: improved benchmark scores, increased engagement, fewer behavior referrals. Even small shifts count. You’re not trying to prove causality in a research sense. You’re looking for impact signals that suggest your PD investment is moving the needle.

Tools You Can Use Tomorrow

I’ve created two companion resources that work hand-in-hand with this framework:

Better Evaluation Questions Template gives you research-backed alternatives to traditional “smile sheets,” including self-efficacy questions (a strong predictor of implementation), utility questions that push teachers to make concrete commitments, and complete sample forms for immediate and follow-up evaluation.

Level 3 Assessment Indicators Checklist is your monthly monitoring tool with 50 specific indicators across five categories: teacher behaviors, conversation quality, artifacts of learning, request patterns, and continuous learning structures. Use it at key checkpoints to see if implementation is growing, stable, or declining.

Both resources are designed to work together: use the question template to gather data, then use the checklist to synthesize what you’re observing.

Your Next Step

Measuring PD impact doesn’t have to be overwhelming, but it does require a shift in mindset. Instead of asking “Did they like it?” start asking “Are they using it?” and “Is it making a difference?”

Here’s what to do this week: Pick your next scheduled PD session. Write down one specific observable behavior you want to see in classrooms within 2-3 weeks. Then work backward: What will teachers need to learn? What will you look for during classroom visits? What questions will tell you if it’s working?

Use the Better Evaluation Questions Template to draft your follow-up survey, and put the Level 3 checklist on your calendar for a month out. The template gives you the questions. The checklist gives you the observation framework. The Kirkpatrick Model gives you the logic. Now it’s your turn to finally answer: Is our PD actually working?